Generative Engine Optimization Explained: A 2026 Guide for Content Teams

TL;DR: Generative engine optimization (GEO) is the practice of shaping content so AI systems like ChatGPT, Perplexity and Google AI Overviews cite it in their answers. The tactics overlap with traditional SEO but reward clarity, citations and topical depth over backlinks alone. A Princeton and Georgia Tech study published at ACM KDD 2024 found that adding statistics, citing sources and including quotations boosts AI visibility by up to 40 percent. According to Search Engine Land’s August 2025 data, AI-referred sessions grew 527 percent year over year. GEO is no longer optional.

You already know what SEO is. You have probably spent years trying to rank in the ten blue links. Then Google started answering questions itself and ChatGPT started sending traffic that converts at rates traditional organic visitors rarely match.

The rules changed. Not completely, but enough to matter. GEO is the discipline that addresses the gap. This guide covers what it is, how the three main AI platforms source citations differently and the specific content moves that increase the chances a model quotes your site instead of someone else’s.

What GEO Actually Is (and What It Is Not)

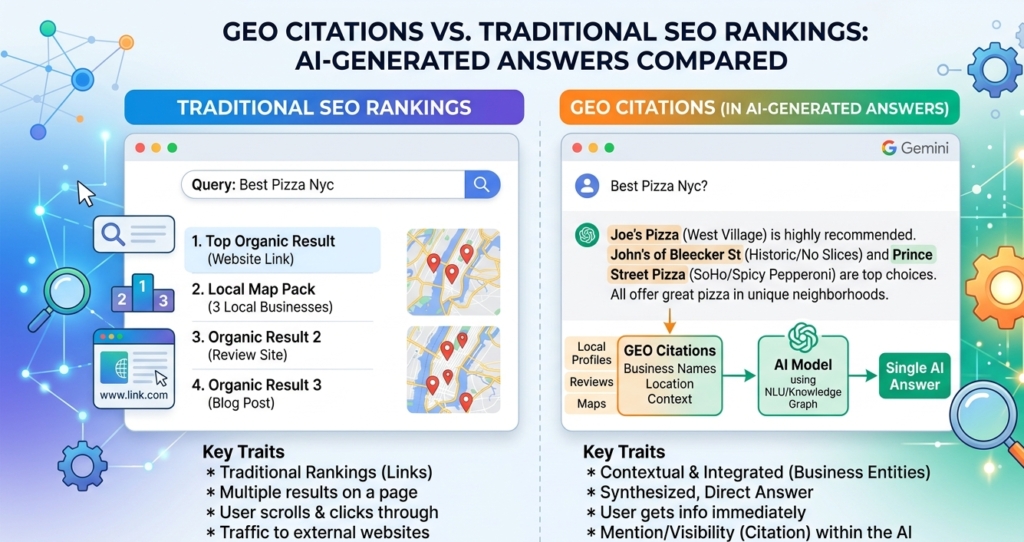

Generative engine optimization is the practice of structuring content so AI answer engines pull from it and cite it in responses. That definition matters because it draws a clear line between ranking in a list and being the source inside an answer. One delivers a link. The other delivers trust at the moment someone is forming a decision.

GEO is not a magic trick and it is not a replacement for foundational SEO. A page that is not crawlable does not get cited. A domain with no authority rarely breaks into AI answers for competitive queries. What GEO does is give well-built pages the structural signals that tip the balance toward being quoted rather than skipped.

It is also not keyword stuffing with new vocabulary. The Princeton and Georgia Tech team that coined the term tested nine content modification strategies across 10,000 queries. Keyword stuffing ranked last and performed about 10 percent worse than the control. Adding statistics, citing sources and including quotations scored highest. That is the core of GEO in one finding.

Why SEO and GEO Are Converging in 2026

The two disciplines are converging because they share most of the same foundation. Crawlable pages, clear structure, strong E-E-A-T signals and factual content help you rank in Google and help you get cited in AI answers.

What separates GEO from pure SEO is what sits on top of that foundation. The goal in SEO is a ranking position. The goal in GEO is a citation inside a generated answer, which often comes from a page that does not rank in the top ten at all.

According to Ahrefs’ September 2025 study of 9.6 million ChatGPT queries, the top cited domains in ChatGPT for US queries are Reddit, Wikipedia, Amazon, Forbes and Business Insider. A separate Ahrefs study from August 2025 found that only 12 percent of URLs cited by ChatGPT, Perplexity and Copilot even rank in Google’s top 10 for the same query. That gap is the entire reason GEO exists as its own discipline.

The practical takeaway: your 2026 content strategy needs to hit both targets. Rank where you can. Structure for citation everywhere else.

The Six Signals AI Systems Use to Pick Citations

AI systems consistently favor content that meets a set of structural and credibility signals. Getting even three or four of these right on a page measurably shifts your citation probability.

Semantic completeness is the biggest one. AI systems evaluate whether your page answers the full question, not just the surface-level keyword. A page that covers the main question plus the follow-ups a reader would naturally ask tends to outperform a page that goes deep on a single angle.

Front-loaded answers matter because AI systems draw disproportionately from the opening of a page. According to Growth Memo’s February 2026 analysis, 44.2 percent of all LLM citations come from the first 30 percent of a piece of text. Put your best answer at the top, not buried in paragraph six.
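
One way to check this on your own pages is to measure where the key answer phrase first appears. The sketch below is a rough illustration, not a tool any AI platform uses: the function names and the whole-word matching approach are assumptions, and the 0.30 cutoff simply mirrors the "first 30 percent" window from the Growth Memo figure.

```python
import re
from typing import List, Optional

FRONT_LOAD_CUTOFF = 0.30  # assumption: mirrors the "first 30 percent" window cited above


def _tokens(text: str) -> List[str]:
    """Lowercase the text and split it into simple word tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def answer_position(text: str, answer_phrase: str) -> Optional[float]:
    """Return where answer_phrase first appears as a fraction of the page's words.

    0.0 means the very first word. None means the phrase was not found.
    """
    words = _tokens(text)
    phrase = _tokens(answer_phrase)
    if not words or not phrase:
        return None
    for i in range(len(words) - len(phrase) + 1):
        if words[i:i + len(phrase)] == phrase:
            return i / len(words)
    return None


def is_front_loaded(text: str, answer_phrase: str) -> bool:
    """True when the phrase first appears inside the front-load window."""
    position = answer_position(text, answer_phrase)
    return position is not None and position <= FRONT_LOAD_CUTOFF


# Hypothetical page text: the answer sits in the opening sentence.
early_page = (
    "GEO is the practice of structuring content so AI answer engines cite it. "
    + "Background detail. " * 20
)
# Hypothetical page text: the answer is buried at the end.
late_page = "Background detail. " * 20 + "The answer is forty two."

print(is_front_loaded(early_page, "structuring content"))
print(is_front_loaded(late_page, "forty two"))
```

Exact phrase matching is deliberately strict. If your answer is paraphrased across the page, pick the most distinctive three or four words of the capsule as the phrase to search for.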

Schema markup helps AI understand what type of content a page contains. FAQ schema, Article schema and How-to schema all give structured signals about the content’s intent. SE Ranking’s November 2025 data found that FAQ sections in content correlate with 4.9 average AI citations compared to 4.4 for pages without them.
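
For teams that generate pages from a CMS or script, FAQ schema can be produced from the same question and answer pairs that appear on the page. The sketch below builds a schema.org FAQPage object as JSON-LD. The helper name `faq_jsonld` and the sample question are illustrative assumptions; the `FAQPage`, `Question` and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from schema.org.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Sample pair taken from this guide's own FAQ section.
faq = faq_jsonld([
    ("Is GEO the same as SEO?", "They overlap heavily but are not identical."),
])

# Wrap the JSON in the script tag that goes into the page's HTML.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(snippet)
```

The markup should mirror text that is visible on the page. As noted elsewhere in this guide, the visible FAQ structure appears to matter more than the markup on its own.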

Freshness plays a stronger role than many people expect. SE Ranking found that pages updated within two months earn an average of 5.0 citations compared to 3.9 for pages older than two years.
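
The SE Ranking averages quoted above are easier to compare as relative lifts. The short calculation below is only arithmetic on the four figures already cited (4.9 versus 4.4 for FAQ sections, 5.0 versus 3.9 for freshness); the function name is an illustrative choice. These are correlations, so the lifts describe differences between groups of pages, not guaranteed gains from a change.

```python
def relative_lift(treated: float, baseline: float) -> float:
    """Percentage increase of treated over baseline."""
    return (treated - baseline) / baseline * 100


faq_lift = relative_lift(4.9, 4.4)        # pages with FAQ sections vs. without
freshness_lift = relative_lift(5.0, 3.9)  # updated within two months vs. older than two years

print(f"FAQ sections: {faq_lift:.1f}% more citations on average")
print(f"Fresh pages:  {freshness_lift:.1f}% more citations on average")
```

The freshness gap works out to roughly 28 percent, noticeably larger than the roughly 11 percent gap associated with FAQ sections.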

Entity density refers to how many named, verifiable things your page references. Real tools, named researchers, specific organizations and sourced statistics all signal that a page is grounded in the real world. The Princeton GEO study found that adding statistics was among its top-performing tactics, which together improved AI visibility by up to about 40 percent.

Crawler access is the unsexy baseline. A page behind a login, blocked by robots.txt or buried behind five redirects does not get into the citation pool. Check Google Search Central’s guidance on AI Overviews to confirm your pages are accessible to Googlebot and the crawlers AI systems depend on.

How ChatGPT, Perplexity and Google AI Overviews Differ in Sourcing

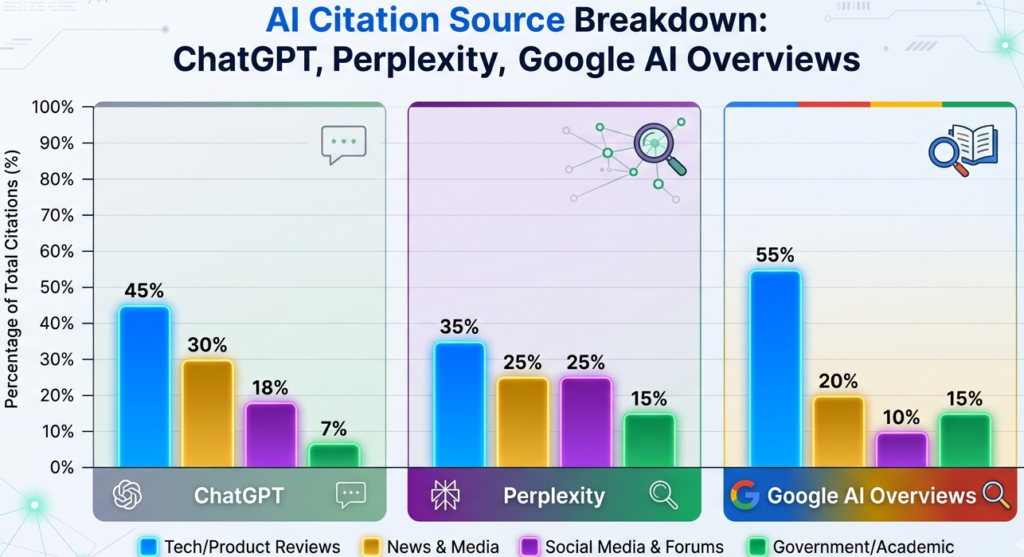

The three major AI surfaces do not pull from the same place. Treating them as one system means optimizing for none of them well.

According to Profound’s analysis of 30 million citations from August 2024 to June 2025, Wikipedia accounts for 47.9 percent of ChatGPT’s top 10 citations. Reddit follows at 11.3 percent. This tells you that ChatGPT strongly rewards encyclopedic, structured, consensus-based content. It trusts the format of reference material.

Perplexity behaves differently. In the same Profound dataset, Reddit accounts for 46.7 percent of Perplexity’s top 10 citations. Perplexity leans heavily on real, recent community discussion. This is why forum-style questions and community discussions rank well there, and why purely corporate content often underperforms.

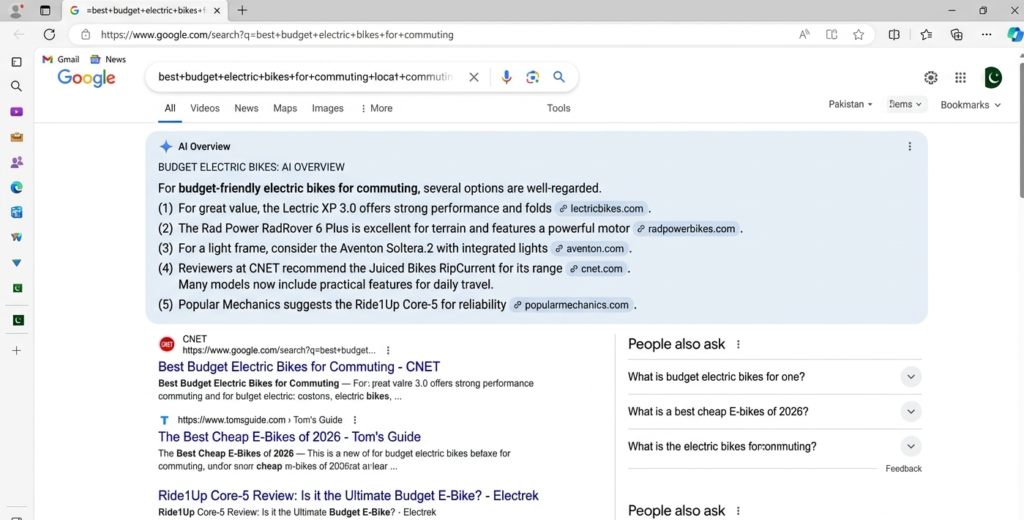

Google AI Overviews show a more distributed pattern. Reddit leads at 21 percent of top citations, with YouTube close at 18.8 percent. Quora and LinkedIn both appear in the top five. Semrush’s analysis of over 10 million keywords found that question-formatted queries starting with “how” and “what” trigger AI Overviews most often, and that AI Overviews appeared in 25 percent of all Google queries during July 2025 before stabilizing around 15.7 percent in November.

The practical split for content teams looks like this:

| Platform | Top Citation Source | What It Rewards |
| --- | --- | --- |
| ChatGPT | Wikipedia (47.9%) | Structured, factual, encyclopedic content |
| Perplexity | Reddit (46.7%) | Community discussion, real-world experience |
| Google AI Overviews | Reddit (21%), YouTube (18.8%) | Authority, question-format, multimedia presence |

No single content type wins all three. Spreading your presence across original written content, community platforms and video gives you the broadest citation footprint.

Seven Content Structure Changes That Boost Citation Odds

These are practical edits, not complete rewrites. Most can be applied to existing pages in a single pass.

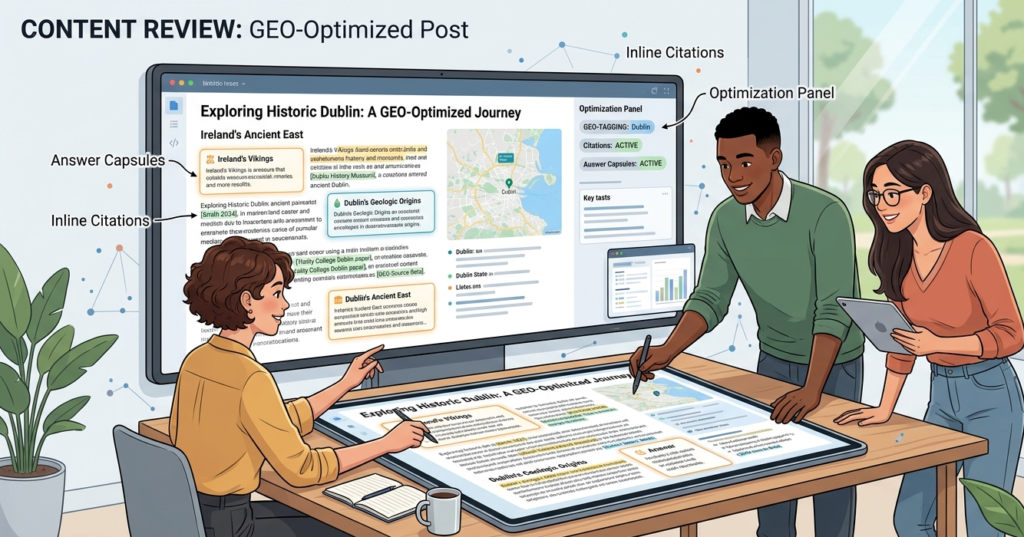

1. Lead every section with a direct answer capsule. Write a 40 to 60 word paragraph at the top of each H2 that answers the section question in full sentences without setup. AI pulls these verbatim or near-verbatim. This is the single highest-return change you can make.

2. Add at least one named statistic every 200 words. The Princeton and Georgia Tech study found statistics addition to be among the strongest GEO tactics it tested, with the top methods improving AI visibility by up to about 40 percent. Use real data with real sources, not estimates dressed as facts.

3. Use question-based H2 headings. “How does Google AI Overviews select citations?” outperforms “Citation Selection.” Questions match the query format AI systems see from users.

4. Break content into chunks of 150 to 300 words. Each chunk should stand alone as a coherent answer. AI systems extract passages, not entire pages. A chunk that requires three paragraphs of context to make sense will not be extracted cleanly.

5. Add schema markup. FAQ schema and Article schema signal content intent to both Google and the AI systems that rely on Google’s index. SE Ranking’s November 2025 study confirmed FAQ sections consistently correlate with higher citation counts, though the markup alone is not the driver. The structure is.

6. Cite named sources inline. Every claim that can be attributed to a named study, organization or named professional should carry that attribution in the text itself, not in a footnote. AI systems read inline citations as credibility signals.

7. Update old pages regularly. Freshness is a measurable factor. A quick update pass that adds new statistics and confirms current accuracy resets the freshness signal for AI systems. According to SE Ranking, pages updated within two months earn about 28 percent more citations than pages older than two years.
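
Three of the changes above (capsule length, chunk size and statistic density) are numeric targets, so they can be checked with a quick script before an editor reads the page. The sketch below is a rough audit under stated assumptions: it treats one H2 section as one chunk, treats the first paragraph as the answer capsule, and counts any numeric token as a candidate statistic, which is only a proxy for a named, sourced statistic. The function and constant names are illustrative.

```python
import re

CAPSULE_RANGE = (40, 60)   # words, from change 1
CHUNK_RANGE = (150, 300)   # words, from change 4
WORDS_PER_STAT = 200       # from change 2


def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())


def audit_section(paragraphs):
    """Audit one H2 section given its paragraphs in order (first = answer capsule)."""
    capsule_words = word_count(paragraphs[0]) if paragraphs else 0
    total_words = sum(word_count(p) for p in paragraphs)
    # Crude proxy: any numeric token (41, 4.9, 10,000) counts as a candidate statistic.
    stat_count = sum(len(re.findall(r"\d[\d,.]*", p)) for p in paragraphs)
    stats_needed = max(1, total_words // WORDS_PER_STAT)
    return {
        "capsule_words": capsule_words,
        "capsule_ok": CAPSULE_RANGE[0] <= capsule_words <= CAPSULE_RANGE[1],
        "total_words": total_words,
        "chunk_ok": CHUNK_RANGE[0] <= total_words <= CHUNK_RANGE[1],
        "stat_count": stat_count,
        "stats_ok": stat_count >= stats_needed,
    }


# Placeholder text standing in for a real section: a 50-word capsule
# followed by a 150-word body that contains one number.
capsule = " ".join(["word"] * 50)
body = " ".join(["word"] * 149 + ["41"])
report = audit_section([capsule, body])
print(report)
```

A failing check is a prompt to reread the section, not a verdict. A number in the text still needs a named source next to it to count as the kind of statistic change 2 describes.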

If you want to track whether your updates are working, the posts on AI search monitoring platforms and the best AI search monitoring tools for 2026 cover what to watch and which tools report citation data accurately.

How to Test If Your Content Is GEO Ready

Testing does not require expensive tools. It requires honest questions about your own page.

Start with the extraction test. Paste one of your H2 sections into ChatGPT and ask: “What is the direct answer to [your H2 question]?” If ChatGPT cannot pull a clean answer from your text, neither will an AI Overview. The answer needs to live in the first two sentences under that heading.

Run the entity test next. Count how many named tools, organizations, researchers or studies appear in your article. Practitioner data collected through 2025 suggests that pages with 15 or more named entities are cited noticeably more often. If your count is below eight, add sourced data points.
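
The entity count can be done by hand, but a short script helps when auditing many pages. The sketch below assumes you maintain your own list of entities relevant to your niche; it does not detect entities automatically. The 15 and 8 thresholds come from the paragraph above, while the "borderline" label for counts in between is an assumption added for illustration.

```python
import re


def find_entities(text, known_entities):
    """Return the known entities that appear in text as whole words (case-insensitive)."""
    found = []
    for entity in known_entities:
        pattern = r"\b" + re.escape(entity) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(entity)
    return found


def entity_verdict(count):
    """Map a distinct-entity count to a rough label."""
    if count >= 15:
        return "strong"
    if count >= 8:
        return "borderline"  # assumption: the guide does not name this middle band
    return "add sourced data points"


# Hypothetical watch list and draft text.
known = ["Ahrefs", "Semrush", "SE Ranking", "Profound", "ChatGPT", "Perplexity"]
draft = (
    "Ahrefs and Semrush both studied ChatGPT citations, "
    "while Profound compared ChatGPT with Perplexity."
)

found = find_entities(draft, known)
print(found, entity_verdict(len(found)))
```

Each entity is counted once no matter how often it appears, which matches the spirit of the test: breadth of named references, not repetition.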

Check your crawl access. Open Google Search Console and confirm the page is indexed and not flagged for any crawl errors. Check that your robots.txt is not blocking the crawlers AI systems use. This is basic but easy to miss after site migrations.
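
The robots.txt part of this check can be scripted with Python's standard library. The sketch below parses robots.txt content you have already downloaded and reports which crawlers are blocked from a given URL. The crawler list is an assumption: Googlebot, GPTBot and PerplexityBot are commonly cited user agent names, but confirm the current names in each vendor's documentation. The sample rules and example.com URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumption: user agent names to test. Verify against each vendor's docs.
AI_CRAWLERS = ("Googlebot", "GPTBot", "PerplexityBot")


def blocked_crawlers(robots_txt, page_url, agents=AI_CRAWLERS):
    """Return the agents that the given robots.txt content blocks from page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]


# Hypothetical robots.txt of the kind that sometimes survives a site migration.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(sample_robots, "https://example.com/guide"))
```

This only covers robots.txt rules. Login walls, redirect chains and indexing status still need to be checked in Google Search Console or with a crawl of the live site.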

Finally, ask whether your page answers the question or introduces it. Many pages spend 200 words setting up the question before getting to the answer. AI does not reward setup. It rewards extraction. If your answer starts in paragraph four, move it to paragraph one.

For a broader look at how to deploy tools that monitor your GEO performance, the guide on AI search optimization tools for smarter SEO walks through the specific platforms worth tracking in 2026. You can also work with someone who builds content with these signals from the first draft by checking the SEO and content writing services page.

Want GEO-Optimized Content Built for Citation From the First Draft?

Most content agencies write for clicks. Writing for citation requires a different set of structural habits: answer capsules in every section, named sources throughout, schema-ready formatting and freshness planning built into the editorial calendar. If you want content built that way from day one, the SEO and content writing services page covers what that looks like in practice. Or if you want to talk through your specific content gaps first, you can start with a discovery call. Either way the conversation is about your goals, not a pitch.

FAQs

Is GEO the same as SEO?

They overlap heavily but are not identical. Traditional SEO targets positions in the ranked list of links. GEO targets inclusion inside AI-generated answers, which often cite pages that rank well below the top ten. The Princeton and Georgia Tech study published at ACM KDD 2024 found that adding statistics, source citations and quotations improved AI visibility by up to 40 percent across 10,000 queries. That is GEO in practice. The same changes tend to improve featured snippet and People Also Ask appearances in traditional search too, which is why the two disciplines are converging.

Do I need an llms.txt file to rank in AI answers?

No. Google’s John Mueller confirmed in 2025 that you do not need a bot-only markdown version of your pages. What you do need is clean technical SEO: pages that crawlers can access, schema that clarifies content type and structure that allows passage-level extraction. The time spent building an llms.txt file is better spent on answer capsules and entity-rich content.

What content types get cited most by AI systems?

According to Semrush’s study of 200,000 AI Overview queries, question-format content using “how,” “what” and “is” triggers AI Overviews most consistently. Wix’s March 2026 analysis of 1.9 million citations found listicles at 21.9 percent, articles at 16.7 percent and product pages at 13.7 percent as the most common citation types across AI Mode, ChatGPT and Perplexity. Pages with citable statistics and clear answer capsules appear across all three platforms.

How long does GEO take to produce measurable results?

Faster than traditional SEO, based on current practitioner data. Structural improvements like adding answer capsules and statistics can produce measurable citation lifts within 30 days because AI systems re-crawl and reassess content regularly. Freshness is an active ranking signal. That said, building consistent AI citation authority in a competitive niche still takes months of sustained output.

Can a small site compete with Wikipedia and Reddit for AI citations?

Not directly. Both domains have backlink profiles and brand mention volumes that small sites cannot match. The real opportunity is in what researchers call “fan-out” queries. When a user asks a broad question, AI systems spin off dozens of sub-queries to fill in the answer. Small sites with niche authority can appear in those sub-queries even when they cannot compete for the parent query. That is the GEO entry point for newer domains.